Breast cancer has become the most common type of cancer affecting women worldwide, accounting for 25% of all cancer cases and affecting 3.5 million people in 2017-18. Early diagnosis significantly increases the chances of survival. The key challenge in cancer detection is classifying tumours as malignant or benign. Research indicates that most experienced physicians can diagnose cancer with 79% accuracy, whereas artificial-intelligence-based diagnosis can achieve 91% accuracy.

NASSCOM CoE-IoT & AI and Eqounix Tech Lab organised a hands-on session on breast cancer detection on 28th September 2019, from 10am onwards, at the CoE Gurugram center. This was a paid session with over 50 attendees, including basic and advanced developers from enterprises such as Sopra Steria, United Health Group, TCS, HCL, Inventum Technologies, Publicis Sapient and Globallogic, and from startups such as Attentive AI, Empass, Anasakta Labs, NEbulARC, SirionLabs and Vision Networkz. Students from ICGEB/JMI, Indira Gandhi Delhi Technical University and other institutions also participated in the session.

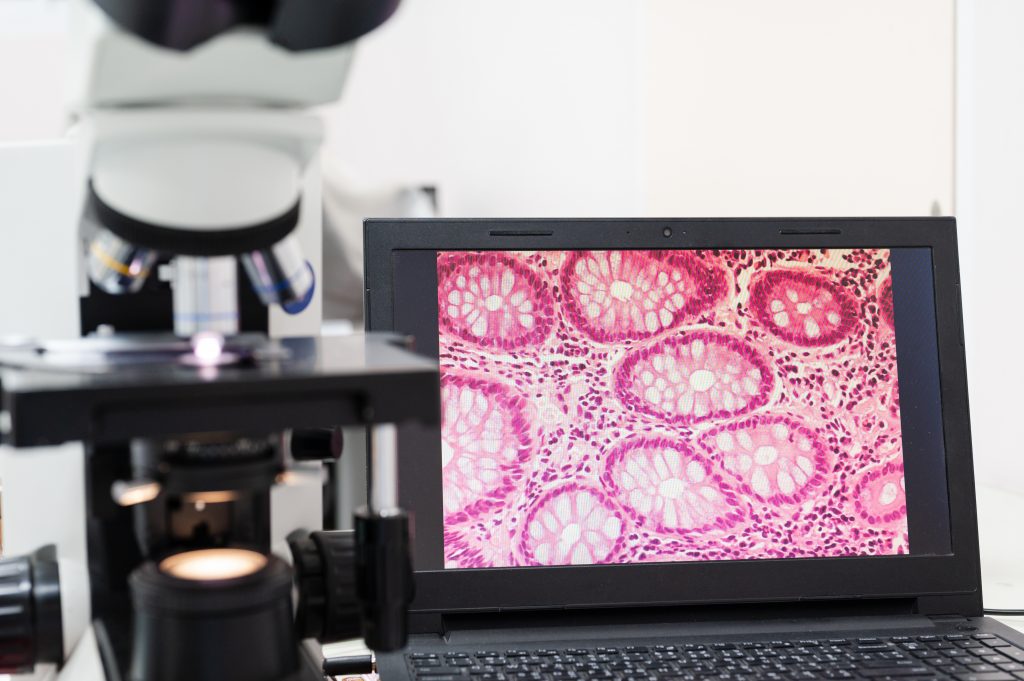

The first half of the session focussed on insights into the Wisconsin Diagnostic Breast Cancer (WDBC) and Invasive Ductal Carcinoma (IDC) datasets: visualisation of the datasets, feature selection and the reasons behind the chosen features, and how physical parameters are translated into a dataset. The second half focussed on feature selection and on CNN- and random-forest-based classification of cancer as malignant or benign, followed by an optimised deployment strategy and cost estimation.

WISCONSIN DIAGNOSTIC BREAST CANCER (WDBC):

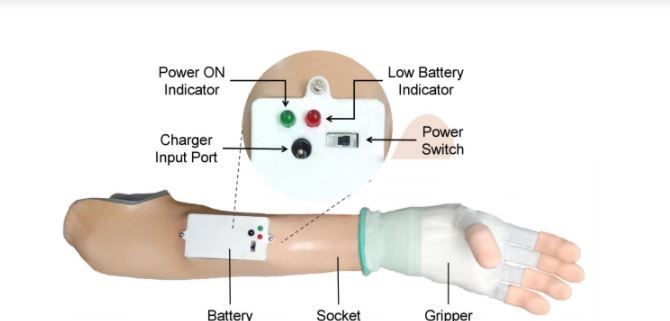

The samples consist of visually assessed nuclear features of Fine Needle Aspirates (FNAs) taken from patients. Attributes 3 to 11 were used to form a 9-dimensional feature vector, which was used to train a neural network to discriminate between benign and malignant samples. Cross-validation was used to estimate the accuracy of the diagnostic algorithm.
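To make the cross-validation step concrete, here is a minimal sketch of k-fold accuracy estimation on 9-dimensional feature vectors. It rests on several assumptions that are not from the session: the data is synthetic (randomly generated, not the WDBC samples), a simple nearest-centroid classifier stands in for the neural network, and the function names (`make_synthetic_samples`, `fit_centroids`, `predict`, `cross_validate`) and the choice of 10 folds are purely illustrative.

```python
import random

def make_synthetic_samples(n_per_class=100, n_features=9, seed=0):
    """Generate made-up 9-dimensional samples (NOT real WDBC data).
    Label 0 stands for benign, label 1 for malignant."""
    rng = random.Random(seed)
    samples = []
    for label, centre in ((0, 3.0), (1, 7.0)):
        for _ in range(n_per_class):
            features = [rng.gauss(centre, 2.0) for _ in range(n_features)]
            samples.append((features, label))
    rng.shuffle(samples)
    return samples

def fit_centroids(train):
    """Compute the mean feature vector of each class."""
    sums, counts = {}, {}
    for features, label in train:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def predict(centroids, features):
    """Return the label whose centroid is closest (squared Euclidean distance)."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))

def cross_validate(samples, k=10):
    """Train on k-1 folds, test on the held-out fold, and repeat for every fold."""
    folds = [samples[i::k] for i in range(k)]
    scores = []
    for i, test_fold in enumerate(folds):
        train = [s for j, fold in enumerate(folds) if j != i for s in fold]
        centroids = fit_centroids(train)
        correct = sum(predict(centroids, f) == y for f, y in test_fold)
        scores.append(correct / len(test_fold))
    return scores

samples = make_synthetic_samples()
scores = cross_validate(samples, k=10)
mean_accuracy = sum(scores) / len(scores)
print(f"mean accuracy over {len(scores)} folds: {mean_accuracy:.3f}")
```

Each sample is used for testing exactly once and never appears in the training set of its own fold, so the mean of the per-fold scores estimates how the classifier would perform on unseen samples.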